LLM-as-a-judge in Langfuse

Using LLM-as-a-judge (model-based evaluations) has proven as a powerful evaluation tool next to human annotation. Via Langfuse, we support setting up LLM-evaluators to evaluate your LLM applications integrated with Langfuse. These evaluators are used to score a specific session/trace/LLM-call in Langfuse on criteria such as correctness, toxicity, or hallucinations.

Langfuse supports two types of model-based evaluations:

LLM-as-a-judge via the Langfuse UI (beta)

- HobbyPublic Beta

- ProPublic Beta

- TeamPublic Beta

- Self HostedNot Available

Store an API key

To use Langfuse LLM-as-a-judge, you have to bring your own LLM API keys. To do so, navigate to the settings page and insert your API key. We store them encrypted on our servers.

Create an evaluator

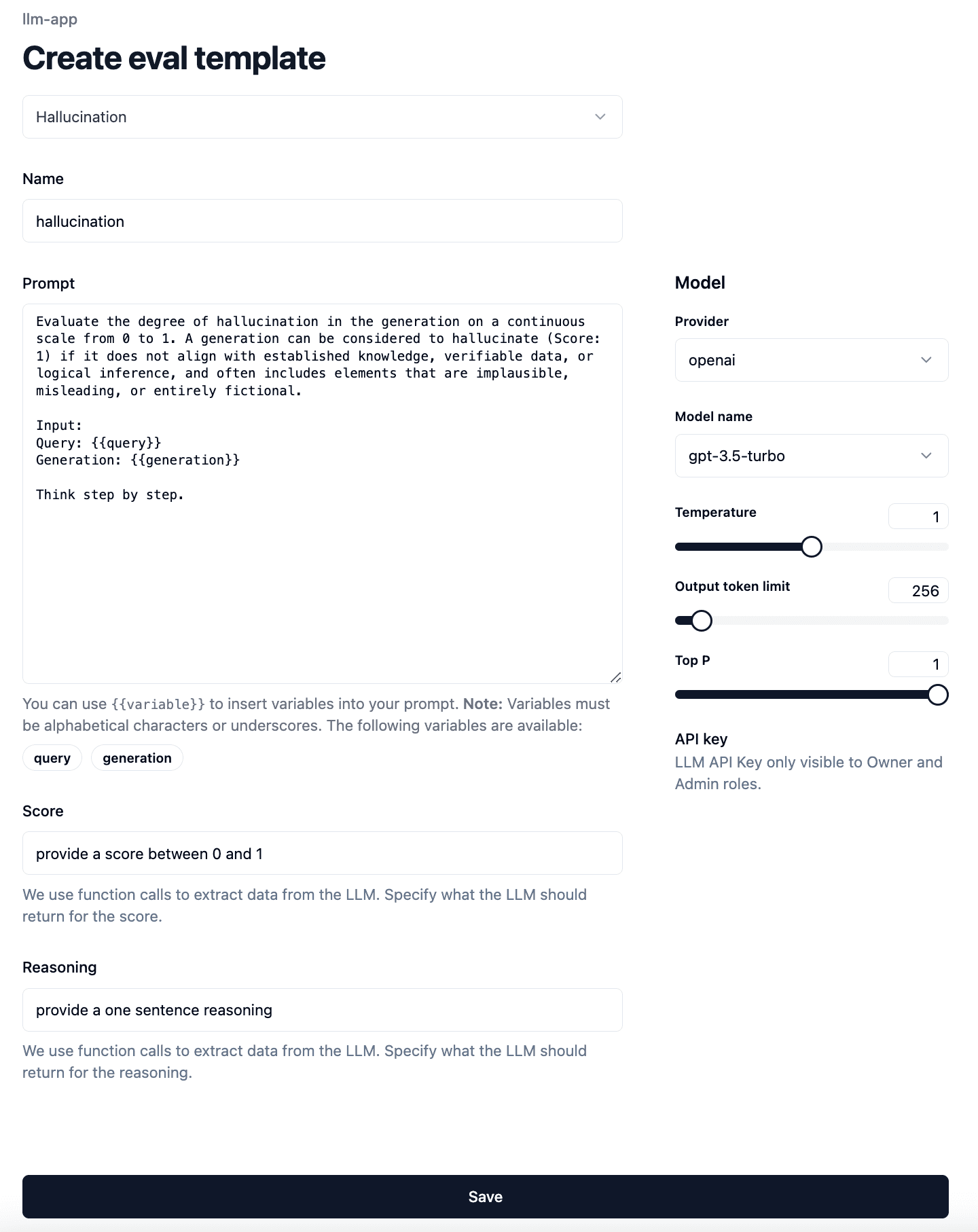

To set up an evaluator, we first need to configure the following:

- Select the model and its parameters.

- Select the evaluation prompt. Langfuse offers a set of managed prompts, but you can also write your own.

- We use function calling to extract the evaluation output. Specify the descriptions for the function parameters

scoreandreasoning. This is how you can direct the LLM to score on a specific range and provide specific reasoning for the score.

We store this information in an so-called evaluator template, so you can reuse it for multiple evaluators.

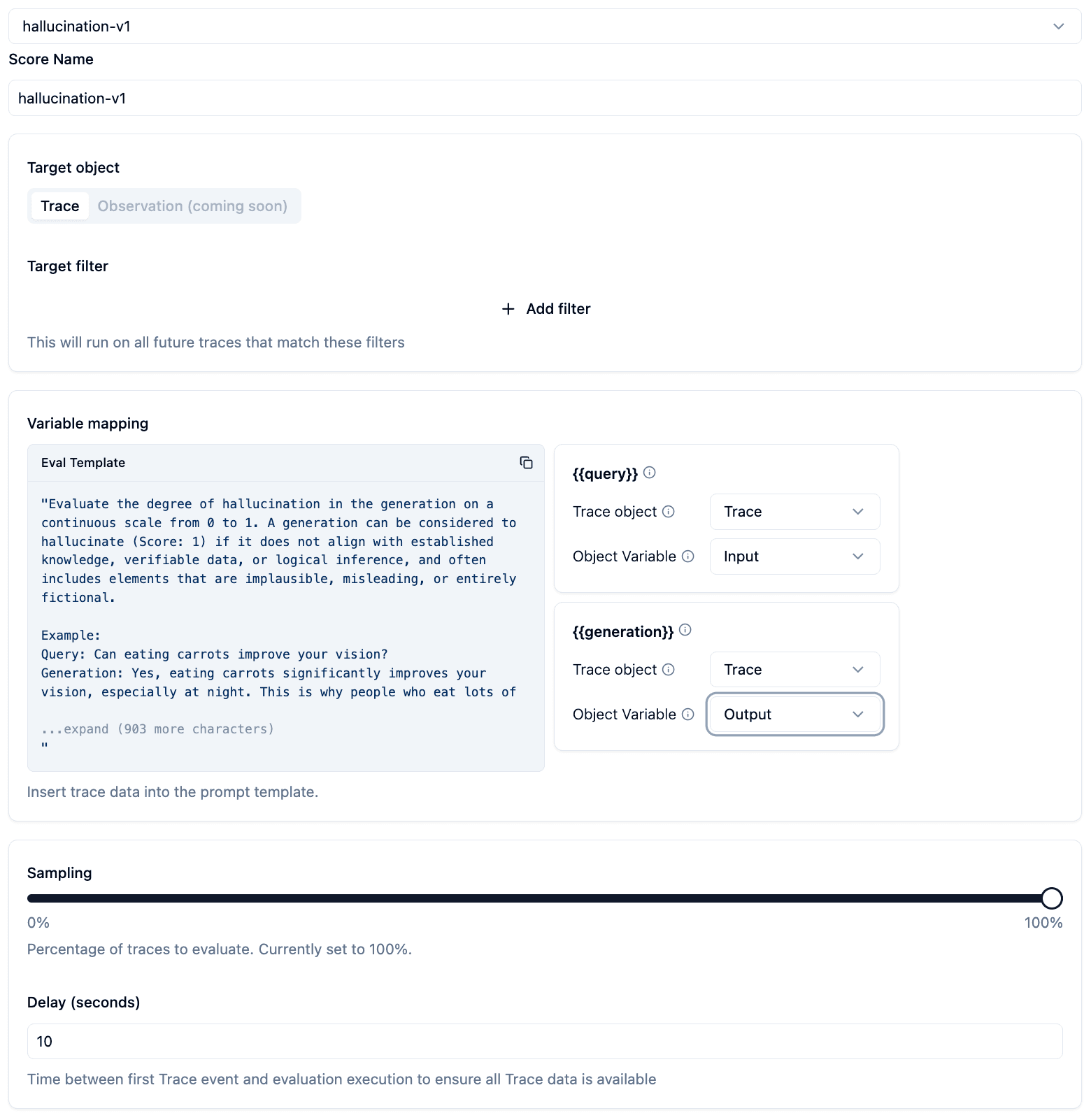

Second, we need to specify on which traces Langfuse should run the evaluator.

- Specify the name of the

scoreswhich will be created as a result of the evaluation. - Filter which newly ingested traces should be evaluated. (Coming soon: select existing traces)

- Specify how Langfuse should fill the variables in the template. Langfuse can extract data from

trace,generations,spans, oreventobjects which belong to a trace. You can choose to takeInput,Outputormetadatafrom each of these objects. Forgenerations,spans, orevents, you also have to specify the name of the object. We will always take the latest object that matches the name. - Reduce the sampling to not run evaluations on each trace. This helps to save LLM API cost.

- Add a delay to the evaluation execution. This is how you can ensure all data arrived at Langfuse servers before evaluation is executed.

See the progress

Once the evaluator is saved, Langfuse will start running evaluations on the traces that match the filter. You can view logs on the log page, or view each evaluator to see configuration and logs.

See scores

Upon receiving new traces, navigate to the trace detail view to see the associated scores.

Custom evaluators via external evaluation pipeline

- HobbyFull

- ProFull

- TeamFull

- Self HostedFull

You can run your own model-based evals on data in Langfuse by fetching traces from Langfuse (e.g. via the Python SDK) and then adding evaluation results as scores back to the traces in Langfuse. This gives you full flexibility to run various eval libraries on your production data and discover which work well for your use case.

The example notebook is a good template to get started with building your own evaluation pipeline.